I think that most people can relate. Over time my career and role shifted. Less time building things directly, more time designing systems, reviewing architectures, and guiding teams. Important work, but further away from the joy of experimenting and writing code.

During my Christmas break something changed. Tools like Claude Code have improved fundamentally. They are so good now that they massively altered my development feedback loop. Instead of either doing nothing or spending hours researching libraries, debugging boilerplate, or scaffolding projects, I now move straight to experimentation.

And the result surprised me. Suddenly I’m writing small services in Go, Python, or Node.js in minutes instead of hours. And let’s be honest: I don’t use or know these languages that well. It works not because the models write perfect code, but because they drastically lower the cost of exploration. Exploration is where ideas happen.

The New Development Loop

The traditional development loop often looked like this:

1. Think about a feature
2. Discuss it
3. Get it approved
4. Find time on the backlog
5. Build it by searching documentation, writing code, debugging, and iterating

You know, work.

Now it increasingly looks like this:

1. Describe an idea
2. Generate architecture options
3. Implement a prototype
4. Ask the model for critique

I iterate rapidly. The model becomes less of a code generator and more of a thinking partner. What surprised me most is how useful this is not just for implementation, but for architecture thinking.

Agents as a Virtual Engineering Team

- One experiment I’ve been running is simulating multiple AI agents acting as a team. Using GSD or BMAD, instead of asking a single model for a solution, I assign roles: product manager, software architect, security engineer, and backend developer. They discuss functionality, challenge assumptions, and refine requirements.

- They even end with: “Was a great meeting.” I am running them in a Docker container with a Telegram hook, so they are one message away.

The outcome often resembles a real design session. Trade-offs surface early, and architectures evolve through discussion rather than a single prompt. It feels less like using a tool and more like running a virtual architecture workshop.
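To make the role idea concrete, here is a minimal sketch of one discussion round. It is not how GSD or BMAD work internally. The `ask` callable, the prompt wording, and the `echo_model` stand-in are all assumptions made for illustration; in practice `ask` would wrap whichever model you use.

```python
from typing import Callable

# Roles taken from the text above; the prompt wording below is an assumption.
ROLES = ["Product manager", "Software architect", "Security engineer", "Backend developer"]

# Hypothetical model interface: takes a prompt string, returns a reply string.
Model = Callable[[str], str]


def run_design_session(idea: str, ask: Model, rounds: int = 1) -> list[tuple[str, str]]:
    """Let each role comment in turn, seeing the transcript so far."""
    transcript: list[tuple[str, str]] = []
    for _ in range(rounds):
        for role in ROLES:
            history = "\n".join(f"{r}: {text}" for r, text in transcript)
            prompt = (
                f"You are the {role} in a design meeting.\n"
                f"Idea: {idea}\n"
                f"Discussion so far:\n{history or '(nothing yet)'}\n"
                "Challenge assumptions and refine the requirements."
            )
            transcript.append((role, ask(prompt)))
    return transcript


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    first_line = prompt.splitlines()[0]
    return f"(reply to: {first_line})"


if __name__ == "__main__":
    for role, reply in run_design_session("Door sensor alerts", echo_model):
        print(f"{role}: {reply}")
```

Because every role sees what the earlier roles said, later speakers can push back on earlier ones, which is where the trade-offs tend to surface.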

Reasoning vs Determinism

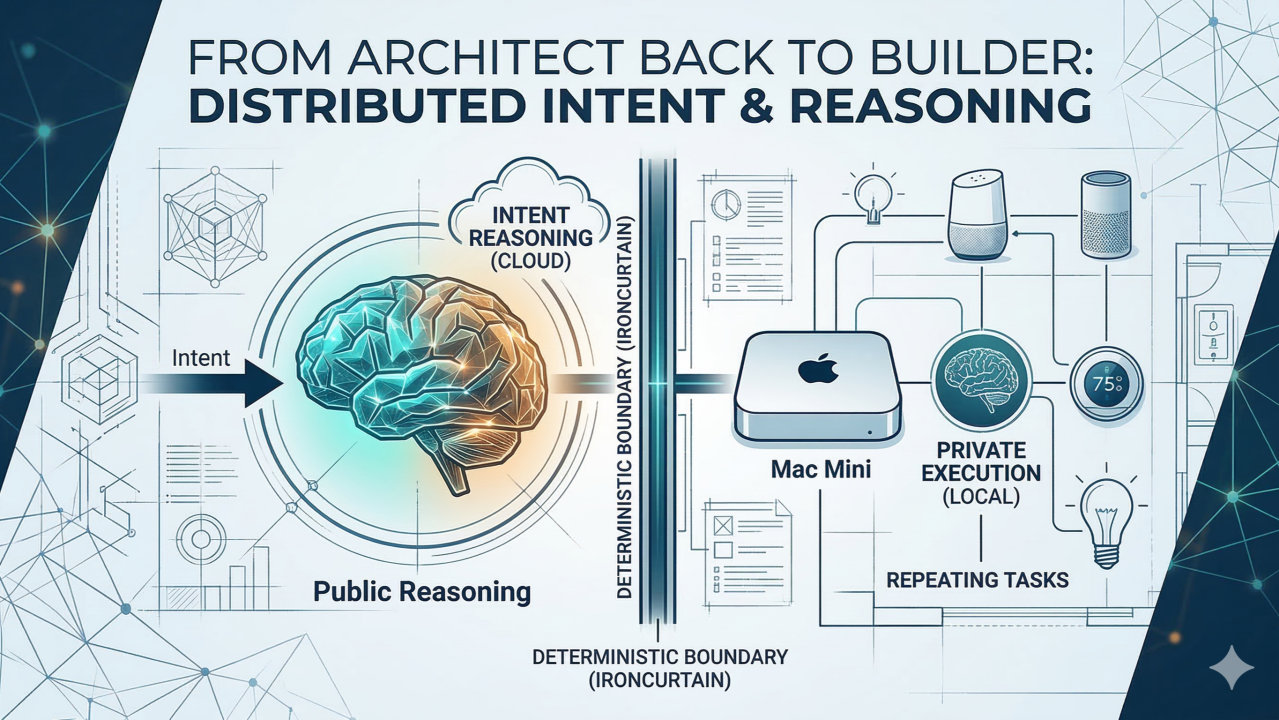

Working with LLMs also forces an interesting architectural question: Where do I want reasoning, and where do I want repeatable determinism? Not every step should involve a model call, and thus tokens. A pattern that works well for me:

- Use LLMs for: interpreting natural language, formalizing a plan, creating tasks
- Use deterministic code for: repeatable outcomes, integrations, automation execution, reliability

The result is a hybrid approach where the model acts as a discovery tool: its reasoning helps me understand the requirements and explore what I actually want. The resulting code then acts as the repeatable executor.
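A minimal sketch of that split, under stated assumptions: the model is asked once to turn a natural-language request into a JSON plan, and plain code validates and executes it. The action names, the plan format, and the `model_output` string are hypothetical and not tied to any real home-automation platform.

```python
import json


# Hypothetical actions; a real setup would call the automation platform here.
def turn_on(device: str) -> str:
    return f"{device} on"


def turn_off(device: str) -> str:
    return f"{device} off"


HANDLERS = {"turn_on": turn_on, "turn_off": turn_off}


def parse_plan(raw: str) -> list[dict]:
    """Validate model output before anything runs; reject unknown actions."""
    steps = json.loads(raw)
    if not isinstance(steps, list):
        raise ValueError("plan must be a JSON list of steps")
    for step in steps:
        if not isinstance(step, dict) or step.get("action") not in HANDLERS:
            raise ValueError(f"unsupported step: {step!r}")
        if not isinstance(step.get("device"), str):
            raise ValueError(f"step needs a device name: {step!r}")
    return steps


def execute(steps: list[dict]) -> list[str]:
    """Deterministic part: same plan in, same results out, no model call."""
    return [HANDLERS[s["action"]](s["device"]) for s in steps]


# Assumed model output for a request like "switch on the hallway light and stop the fan".
model_output = (
    '[{"action": "turn_on", "device": "hallway light"},'
    ' {"action": "turn_off", "device": "fan"}]'
)
print(execute(parse_plan(model_output)))
```

Once a plan has been validated it can be stored and replayed without spending another token, which is the point of keeping execution out of the model.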

Local Models vs Cloud Models

Another dimension is where the intelligence runs. Public models like ChatGPT and Gemini are incredibly capable. But running models locally opens up interesting possibilities. Recently I started experimenting with running the Qwen model locally on my Mac mini. That introduces a different set of trade-offs:

- Local models: full data privacy, no API costs, predictable (higher) latency. Great for OCRing your own letters and analysing your bank statements.

- Cloud models: stronger reasoning, much larger context windows, and constantly improving capabilities. Great for experimentation on difficult new projects.

This leads to the next architectural question: Which reasoning should stay local, and which should go to the cloud? Sensitive automation data must stay local, while more complex reasoning can leverage public models.
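One way to express that rule in code is a small routing function. This is only a sketch: the `Task` fields and the choice to default to local are my assumptions, and in a real system deciding what counts as sensitive is the hard part.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    contains_sensitive_data: bool
    needs_complex_reasoning: bool = False


def choose_backend(task: Task) -> str:
    """Return "local" or "cloud". Sensitivity is checked first and always wins."""
    if task.contains_sensitive_data:
        return "local"
    if task.needs_complex_reasoning:
        return "cloud"
    # Assumption: default to local, since it carries no API cost.
    return "local"


if __name__ == "__main__":
    tasks = [
        Task("Analyse bank statements", contains_sensitive_data=True, needs_complex_reasoning=True),
        Task("Design a new event pipeline", contains_sensitive_data=False, needs_complex_reasoning=True),
        Task("Rename sensor labels", contains_sensitive_data=False),
    ]
    for task in tasks:
        print(f"{task.description}: {choose_backend(task)}")
```

Checking sensitivity before complexity means a task that is both sensitive and hard still stays on the local model, even if the answer is weaker.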

My Playground: Home Automation

Home automation has become my experimentation playground. With the help of the newest releases of these AI coding tools, I can quickly prototype services that connect sensors, interpret events, generate automations, and integrate different platforms.

Because the models help with scaffolding, building, and exploration, the focus shifts from syntax to system design. Things that once took days can now be prototyped in an hour. It is also so much fun building your own agentic factory. Half-baked, hare-brained schemes go in, get discussed, and get turned into plans that are then built by a swarm of sub-agents, some of them even running locally. The architecture work, thinking about how to distribute intent and reasoning without over-engineering skills.md or piling on too many MCPs and plugins, is wonderful. It is fair to say I’m having a blast. My wife and kids… not so much.

Augmented Builders

For developers who moved into architecture roles, AI coding tools offer something unexpected. A path back to building. They don’t replace engineering thinking. They amplify it. They allow you to explore ideas directly in code again, test hypotheses quickly, and prototype systems that would previously have taken far more time. In many ways these tools bring us back to the essence of software development:

Curiosity. Experimentation. Building things that didn’t exist yesterday.

I’d love to hear how others are using AI coding tools in their architecture or development workflows.
