I’ve seen more posts than I care for where someone proudly presents a dashboard tracking “tokens consumed” as the team’s primary AI productivity metric. Not bugs fixed. Not features shipped. Not incidents avoided. Tokens. As if buying more plane tickets proves you’re traveling somewhere useful.

It would be funny if it weren’t setting up some expensive lessons.

Gergely Orosz at The Pragmatic Engineer recently documented what those lessons are starting to look like: Anthropic shipping obvious UX bugs, Amazon seeing “high blast radius” outages from AI-assisted changes, Big Tech companies gaming token usage instead of measuring outcomes. It reads like a warning. Or, if you’ve been around long enough, it reads like a pattern.

Because we’ve been here before.

We are at the bottom of our learning curve

I’m going to say something that probably feels wrong to some of us: AI is currently slowing most teams down. And that’s completely fine.

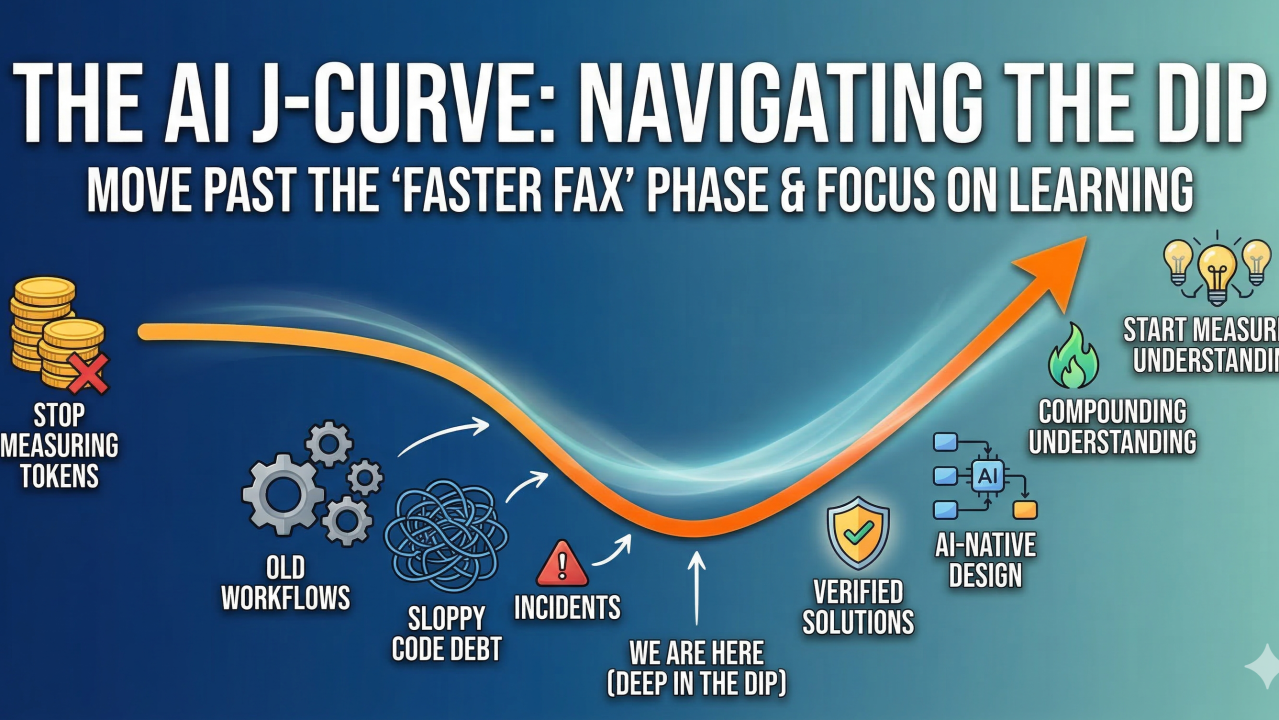

The J-curve is a familiar shape to anyone who has watched an organization absorb a significant capability shift. Performance dips before it rises. Not because the capability is bad, but because you spend the early period making the new thing fit the old world. Then, eventually, you rethink the old world. Right now, we are deep in the dip.

We’re spending more time worrying about and reviewing “sloppy” AI code than we would have spent writing it ourselves. We’re set up to inherit technical debt that was generated in seconds but will take months to unwind. We’re watching agents make confident, fast decisions in contexts they don’t understand, and then paying the bill when those decisions cascade through a system. (None of this is the AI’s fault. A junior developer making the same confident, fast decisions in a codebase they don’t understand would cause the same problems. We’d just have blamed the onboarding process.)

The cost-cutting pressure is making it worse. Organizations aren’t deploying agents because they’re the right tool for the job. They’re deploying them because the board wants a headcount story. That’s not an AI strategy. That’s a hammer looking for nails.

Don’t just bolt AI onto your current Way of Working

The structural problem is that we’re asking AI to fit into workflows, codebases, and decision loops that were never designed for it.

Think about what happened when enterprises first adopted the internet. The companies that actually succeeded didn’t just digitize the paper memo. They asked what their business looked like when communication was instantaneous, and built something new from that answer. The ones who just sent faster faxes went nowhere.

We see that pattern everywhere: sailing ship, steamship, container ship. It’s not about the propulsion technology, but about what you are as a company.

We’re in the faster-fax phase. We’ve strapped AI onto the sprint cycle, the legacy code, and our Jira board, then measured token consumption to prove we’re innovating.

And most people don’t yet know how to work with AI well enough for this to change. We’ve barely mastered how to write a useful Google search query (really, watch someone struggle with one sometime). Someone even made LMGTFY (Let Me Google That For You). Now we’re expecting those same teams to design effective agentic workflows. Overnight.

The pendulum is going to swing

It always does. After a few more high-profile incidents, there will be a retreat. “Humans in the loop for everything.” “We tried the AI thing.” Then, a year later, the pressure builds again and we overcommit in the other direction.

That’s the shape of the J-curve. It’s not a smooth line down and then up. It’s messy, political, and driven as much by incident timelines as by actual learning.

The only thing that shortens the dip is focusing on the learning rather than the metrics.

Stop measuring tokens. Start measuring understanding. One of those things compounds. The other just burns.
